PI: Zheng Chen, Linköping University

co-PI: Michael Lentmaier, Lund University

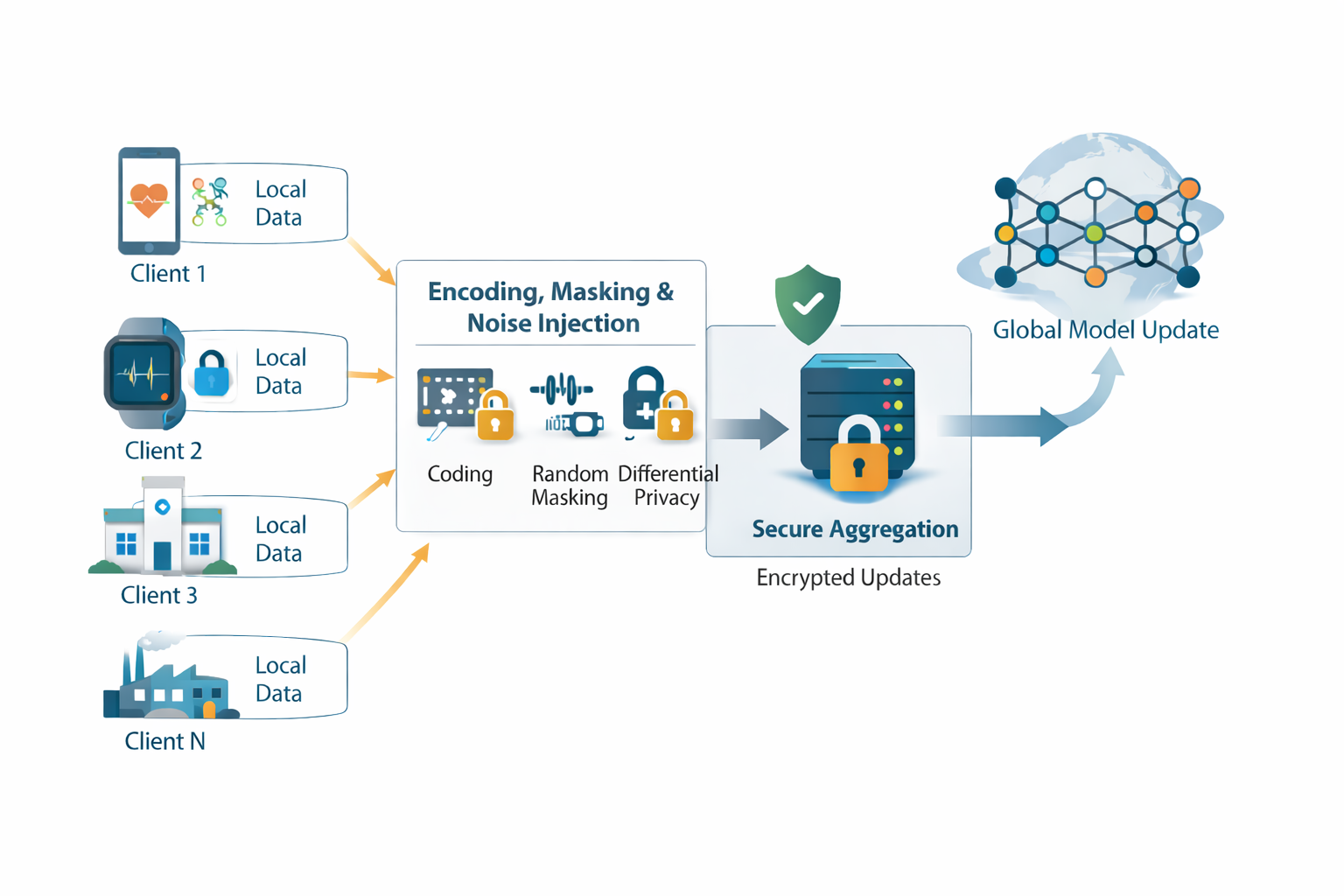

Federated learning (FL) is a distributed learning paradigm where multiple agents collaborate to solve a common learning task by conducting local training and exchanging model updates with a parameter server. Even though local datasets are kept private, sensitive information can still be inferred from the exchanged model updates, for example through membership inference attacks. Among the various strategies proposed to address privacy concerns in FL, two approaches have garnered significant attention: secure aggregation based on random masking and secret sharing, and differential privacy achieved by injecting random artificial noise. This project aims to develop novel techniques to achieve privacy protection in FL with minimal utility degradation, and re-examine the communication-privacy-utility interplay in differentially private FL with practical coding schemes.
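The two privacy mechanisms named above can be illustrated with a toy sketch. It is not the project's method, and it makes several labeled assumptions. Updates are fixed-point encoded into the ring of integers modulo `MODULUS` using a scale `SCALE` (both hypothetical parameters). Each pair of clients shares a uniformly random mask that one adds and the other subtracts, so the masks cancel in the server's sum. In real secure-aggregation protocols these masks come from pairwise key agreement, with secret sharing used to recover from client dropouts; here they are simply drawn from one local RNG, and dropouts are not handled. For differential privacy, each client clips its update to an L2 norm of `clip_norm` and adds Gaussian noise with standard deviation `sigma * clip_norm`. The noise level is illustrative, and no privacy accounting is done.

```python
import math
import random
from typing import List

MODULUS = 2 ** 32  # hypothetical ring size for masked integer arithmetic
SCALE = 2 ** 16    # hypothetical fixed-point scale for quantizing floats


def clip_and_noise(update: List[float], clip_norm: float, sigma: float,
                   rng: random.Random) -> List[float]:
    """Clip the update to L2 norm <= clip_norm, then add Gaussian noise
    with standard deviation sigma * clip_norm to every coordinate."""
    norm = math.sqrt(sum(x * x for x in update))
    factor = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * factor + rng.gauss(0.0, sigma * clip_norm) for x in update]


def encode(update: List[float]) -> List[int]:
    """Fixed-point encode each coordinate into Z_MODULUS (negatives wrap around)."""
    return [round(x * SCALE) % MODULUS for x in update]


def decode(values: List[int]) -> List[float]:
    """Map ring elements back to signed floats; assumes the true sum
    lies within half the ring on either side of zero."""
    return [((v + MODULUS // 2) % MODULUS - MODULUS // 2) / SCALE for v in values]


def pairwise_masks(num_clients: int, dim: int, rng: random.Random) -> List[List[int]]:
    """For each pair i < j, draw a uniform mask r_ij; client i adds it and
    client j subtracts it, so all masks sum to zero modulo MODULUS."""
    masks = [[0] * dim for _ in range(num_clients)]
    for i in range(num_clients):
        for j in range(i + 1, num_clients):
            r = [rng.randrange(MODULUS) for _ in range(dim)]
            for k in range(dim):
                masks[i][k] = (masks[i][k] + r[k]) % MODULUS
                masks[j][k] = (masks[j][k] - r[k]) % MODULUS
    return masks


def secure_sum(updates: List[List[float]], rng: random.Random) -> List[float]:
    """The server only sees masked vectors; the pairwise masks cancel in the sum."""
    dim = len(updates[0])
    masks = pairwise_masks(len(updates), dim, rng)
    masked = [[(e + m) % MODULUS for e, m in zip(encode(u), mask)]
              for u, mask in zip(updates, masks)]
    total = [sum(col) % MODULUS for col in zip(*masked)]
    return decode(total)


if __name__ == "__main__":
    rng = random.Random(0)
    updates = [[0.5, -1.0, 2.0], [1.5, 0.25, -0.5], [-1.0, 0.75, 0.5]]
    print(secure_sum(updates, rng))  # [1.0, 0.0, 2.0], the plain sum
    # Combining both mechanisms: clip and noise locally, then aggregate securely.
    noisy = [clip_and_noise(u, clip_norm=1.0, sigma=0.5, rng=rng) for u in updates]
    print(secure_sum(noisy, rng))    # noisy sum; values vary with the noise draw
```

Masking alone hides individual updates from the server but reveals their exact sum. The added noise is what limits what that sum leaks about any single client. The sketch also shows the utility cost that the project aims to minimize: the aggregate is perturbed in proportion to `sigma`.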

Project number: G3