Focus Period Lund 2026

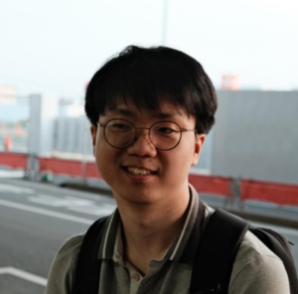

PhD Student

Kim Jaechul Graduate School of AI – KAIST (South Korea)

Jaeyoung Cha is a third-year PhD student at the Kim Jaechul Graduate School of AI, KAIST, advised by Chulhee “Charlie” Yun (OptiML Lab). His research focuses on the theoretical foundations of modern deep learning, with particular emphasis on convex optimization, including the analysis of shuffling stochastic gradient methods. Recently, he has also been looking into theoretical and algorithmic problems in large language models, including length generalization and speculative decoding.

His work has been published at leading international machine learning conferences, including ICML (with an oral presentation), NeurIPS, and ICLR. He has also served as a reviewer for these venues and received a Top Reviewer Award. Jaeyoung earned his MSc in AI from KAIST and his BSc in Cyber Security (major, summa cum laude) and Mathematics (minor) from Korea University.

Presenting: Shuffling SGD in Convex Optimization: History, Limits, and Small-Epoch Convergence

Shuffling SGD, also known as without-replacement SGD, is one of the most widely used methods for finite-sum optimization in practice. Unlike with-replacement SGD, which samples component functions in an i.i.d. manner, without-replacement SGD processes each component function exactly once per epoch in a shuffled order. Despite its practical importance, the theoretical analysis of shuffling SGD is considerably more challenging than that of with-replacement SGD, and several important questions are still open. In this talk, I will review the history of the theoretical study of shuffling SGD in convex optimization and present some of my own results. The talk is organized around two themes. First, I will discuss the convergence rates of shuffling SGD under different permutation strategies. Second, I will discuss convergence in the small-epoch regime, which is especially relevant to modern learning problems but is still much less understood than the classical large-epoch setting.
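To make the distinction between the two sampling schemes concrete, here is a minimal Python sketch. It is illustrative only and is not taken from the talk: the scalar least-squares objective, the function names, and the step size are all assumptions chosen for brevity. The shuffling variant shown draws a fresh permutation every epoch, which is only one of several possible permutation strategies.

```python
import random

def component_grad(x, a_i, b_i):
    # Gradient of the (assumed) component 0.5 * (a_i * x - b_i)**2 in x.
    return a_i * (a_i * x - b_i)

def with_replacement_epoch_indices(n, rng):
    # n i.i.d. uniform draws: indices may repeat and some may be skipped.
    return [rng.randrange(n) for _ in range(n)]

def random_reshuffling_epoch_indices(n, rng):
    # A fresh uniformly random permutation: each index appears exactly once.
    order = list(range(n))
    rng.shuffle(order)
    return order

def run_sgd(a, b, epochs, step_size, index_sampler, seed=0):
    # Run SGD on (1/n) * sum_i 0.5 * (a[i] * x - b[i])**2, starting at x = 0.
    # Only the index_sampler differs between the two methods.
    rng = random.Random(seed)
    n = len(a)
    x = 0.0
    for _ in range(epochs):
        for i in index_sampler(n, rng):
            x -= step_size * component_grad(x, a[i], b[i])
    return x
```

For example, `run_sgd(a, b, 50, 0.1, random_reshuffling_epoch_indices)` and `run_sgd(a, b, 50, 0.1, with_replacement_epoch_indices)` run the two methods on the same data. The update rule is identical in both cases, and the only difference is the order in which component indices are visited within an epoch.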